TL;DR

AI is not just models and benchmarks; it’s human experience. No matter how powerful a model is, people interact with it through interfaces and workflows, and they rely on it only as far as they trust it. User experience (UX), accessibility, and governance are just as critical as data, model architecture, and parameters.

Let’s explore why UX is the real determinant of adoption, how accessibility is a form of governance, and why the future of AI depends on hybrid builders who can bridge research, product, policy, and people.

AI Isn’t Just Math, It’s Experience

Every few weeks, headlines celebrate another AI milestone:

- “X% better on MMLU!”

- “Y% more efficient transformer architecture!”

- “Z trillion tokens used in pretraining!”

It’s exciting. It’s impressive. But here’s the truth: users don’t experience benchmarks, they experience products.

A parent asking an AI to summarize their child’s IEP doesn’t care about its ImageNet score. A doctor using an AI-powered assistant doesn’t care whether it’s autoregressive or a diffusion model. What matters is clarity, trust, and usability.

That’s where user experience (UX) comes in. Without thoughtful design, AI will remain an elite tool for technologists instead of a transformative force for everyone.

Models Are Infrastructure, Not Products

At its core, a model is just math: a giant set of weights and vectors that transform input into output. Without an application layer (or UI), it’s inert.

Think of it like this:

- A printing press without books: the press is an engineering marvel, but useless without the stories it prints.

- A car engine without a vehicle: incredible horsepower, but it’s not going to go anywhere.

- A model without UX: powerful, but inaccessible.

This is why OpenAI’s GPT models became household names only once they were wrapped in ChatGPT, a product with an intuitive interface.

The press is the model; the books are the user experience.

The Benchmark vs. Reality Divide

Researchers love benchmarks. Academic papers celebrate small improvements: “We improved ImageNet classification accuracy by 1.2%!” But out in the world, almost no one cares. Users don’t ask, “What’s your F1 score?”

They ask:

- “Does this tool actually help me do my job?”

- “Can I use it if I’m blind or dyslexic?”

- “Can I trust the answer enough to act on it?”

A 1% gain on MMLU doesn’t mean a child can use your homework helper without confusion. A new architecture doesn’t mean a teacher feels confident relying on it.

However, a product that’s 15% more usable, trustworthy, and accessible could mean adoption by millions.

Why UX Determines Adoption

Case Study 1: Google Translate

In its early days, Google Translate was like me when I was first learning German: clunky and literal. The translations were often technically correct but functionally useless.

Adoption skyrocketed only after UX improvements:

- Auto-detect languages.

- Inline suggestions.

- Mobile apps with instant camera translation.

The models improved too (Google switched to neural machine translation in 2016), but the experience is what put them within everyone’s reach.

Case Study 2: Midjourney on Discord

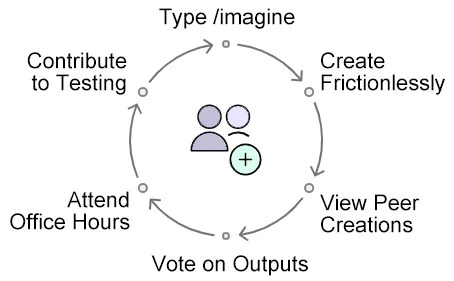

Midjourney’s breakthrough wasn’t just image quality, it was distribution. By launching inside Discord, a platform millions already used, they made experimentation frictionless.

- You type /imagine.

- You start creating right away, with no onboarding friction.

- You instantly see your peers’ creations.

- You upvote or downvote outputs.

- Perhaps most critically, they hold weekly open office hours and crowdsource testing of new models.

The community-driven UX turned experimentation into engagement, engagement into data, and data into model improvement.

UX as Governance

Here’s my hot take: UX is governance.

- A consent checkbox is a governance mechanism.

- A “Why did I get this result?” button is transparency.

- A clear “stop generating” option is safety.
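To make the last item concrete, here is a minimal sketch of how a “stop generating” control can be wired into a streaming response. It assumes a hypothetical setup where the model's output arrives as an iterable of tokens and the UI's stop button sets a shared flag; the names `stream_with_stop` and `stop_requested` are illustrative, not any product's API.

```python
import threading
from typing import Iterable, Iterator


def stream_with_stop(tokens: Iterable[str], stop_requested: threading.Event) -> Iterator[str]:
    """Yield tokens one at a time until the user asks to stop.

    `tokens` stands in for a model's streaming output (an assumption for
    this sketch). `stop_requested` is the flag a stop button would set.
    """
    for token in tokens:
        # Check the flag before showing each token, so a stop request
        # takes effect at the very next token.
        if stop_requested.is_set():
            return
        yield token


# Simulated session: the user presses "stop" after seeing two tokens.
stop_requested = threading.Event()
shown = []
for index, token in enumerate(stream_with_stop(["The", "answer", "is", "maybe"], stop_requested)):
    shown.append(token)
    if index == 1:
        stop_requested.set()  # stands in for the button click

print(shown)
```

The design point is that the user's decision is checked on every token, so control returns to the person immediately instead of after the model finishes.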

We often treat governance as policy documents written in conference rooms. Laws like the ADA and Section 508 already require accessibility for many digital systems (though most companies don’t know enough about accessibility to put these regulations into practice effectively).

As AI enters government and healthcare, the stakes only rise.

I’ve watched a blind user try to navigate an AI chatbot with a screen reader. The unlabeled, low-contrast buttons that couldn’t be reached by keyboard weren’t just bad UX; they were exclusion by design.

That’s governance failure at the interface level.

Bad UX = bad governance.

Governance doesn’t just happen in legislative chambers. It happens in buttons, menus, error messages, and accessibility features.

If a disabled person can’t use your interface, you’ve excluded them from innovation. If your chatbot buries disclaimers, you’ve undermined trust.
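Unlabeled buttons like the ones described above are one of the few accessibility failures that a simple script can catch. The following is a rough sketch using only Python's standard library. It assumes a simplified rule: a button has a name if it has visible text, an `aria-label`, an `aria-labelledby`, a `title`, or an image with `alt` text. The class name `UnlabeledButtonFinder` is made up for this example, and the real accessible-name rules are more involved, so treat it as a smoke test rather than an audit.

```python
from html.parser import HTMLParser


class UnlabeledButtonFinder(HTMLParser):
    """Collects <button> elements that expose no name to a screen reader."""

    def __init__(self):
        super().__init__()
        self.unlabeled = []  # (line, column) of each offending button
        self._open = None    # state for the button currently being parsed

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "button":
            named = bool(
                (attributes.get("aria-label") or "").strip()
                or attributes.get("aria-labelledby")
                or attributes.get("title")
            )
            self._open = {"pos": self.getpos(), "named": named}
        elif tag == "img" and self._open is not None:
            # An image with alt text gives the button a name.
            if (attributes.get("alt") or "").strip():
                self._open["named"] = True

    def handle_data(self, data):
        # Any visible text inside the button counts as a name.
        if self._open is not None and data.strip():
            self._open["named"] = True

    def handle_endtag(self, tag):
        if tag == "button" and self._open is not None:
            if not self._open["named"]:
                self.unlabeled.append(self._open["pos"])
            self._open = None


SAMPLE = """
<button aria-label="Send message"><svg></svg></button>
<button><svg></svg></button>
<button>Stop generating</button>
"""

finder = UnlabeledButtonFinder()
finder.feed(SAMPLE)
finder.close()
for line, column in finder.unlabeled:
    print(f"Button with no accessible name at line {line}, column {column}")
```

A check like this can run on every build, but it only finds missing labels. Whether a label is meaningful still takes testing with people who use screen readers.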

The UX Skillset Frontier AI Companies Need

AI labs are discovering they need hybrid builders (you know, those unicorns everyone talks about): people who can bridge research and reality.

You may have heard of this being called “forward deployed research.” This type of research thrives on people who:

- Prototype messy solutions with models.

- Test them in the wild with real users.

- Distill feedback into design improvements.

- Collapse hacks into scalable, reliable systems.

Don’t mistake this for MVP design and development. This is applied UX research at the frontier.

What skills does this demand?

- Rapid prototyping: not perfect code, but quick experiments.

- Accessibility testing: ensuring inclusivity is baked in early.

- Communication: translating research constraints into design solutions.

- Feedback mapping: turning user frustration into system improvement.

In other words, UX at AI labs is less about pixel-perfect Figma files and more about designing in chaos. (But still designing.)
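Accessibility testing during rapid prototyping can start with something as small as a color contrast check. The sketch below implements the contrast ratio formula from WCAG 2.x: relative luminance of each color, then (lighter + 0.05) / (darker + 0.05). The function names are my own, and it assumes six-digit hex colors with no transparency. WCAG level AA asks for at least 4.5:1 for normal text and 3:1 for large text and UI components.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light, per the WCAG 2.x definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color written as '#rrggbb'."""
    digits = hex_color.lstrip("#")
    red, green, blue = (int(digits[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(red) + 0.7152 * _linearize(green) + 0.0722 * _linearize(blue)


def contrast_ratio(foreground: str, background: str) -> float:
    """WCAG contrast ratio between two colors, from 1.0 (identical) up to 21.0."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str, large_or_ui: bool = False) -> bool:
    """True if the pair meets the AA threshold (4.5:1, or 3:1 for large text and UI components)."""
    threshold = 3.0 if large_or_ui else 4.5
    return contrast_ratio(foreground, background) >= threshold


# Two nearly identical greys on white land on opposite sides of the AA line.
for grey in ("#767676", "#777777"):
    ratio = contrast_ratio(grey, "#ffffff")
    print(f"{grey} on white: {ratio:.2f}:1, passes AA for body text: {passes_aa(grey, '#ffffff')}")
```

Those two greys are indistinguishable to most people, which is why this is better measured than eyeballed.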

For UX professionals in AI, this is the playbook:

- Don’t aim for perfect. Aim for useful enough to learn something.

- Get prototypes in front of diverse users, especially edge cases.

- Document frustrations, accessibility gaps, and moments of delight.

- Feed that back into both design and model improvement.

UX professionals who can work as forward deployed researchers will be invaluable hires at AI labs.

What This Means for AI Teams and Companies

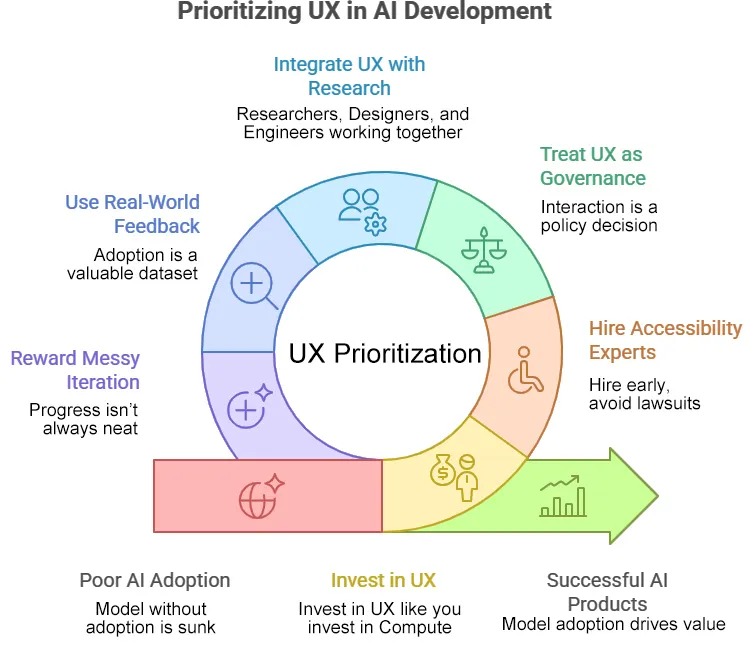

If you’re building AI products:

- Invest in UX like you invest in compute. A model without adoption is a sunk cost.

- Hire accessibility experts early. Don’t bolt them on after lawsuits.

- Treat UX as governance. Every interaction is a policy decision made tangible.

If you’re a research lab:

- Don’t silo UX from research. Let designers prototype alongside engineers.

- Use real-world feedback as training data. Adoption is a dataset, not a byproduct.

- Reward messy iteration. Progress doesn’t always look like neat graphs or polished PowerPoint and Keynote decks.

Where Models Meet People

The AI world is obsessed with scale: bigger datasets, bigger compute clusters, bigger models. But the true measure is whether real people, in all their diversity, can use AI tools safely, joyfully, and effectively.

If we want AI to scale responsibly, we must design not just for power, but for trust, inclusion, and delight.

The future of AI will be defined not only by who can train the biggest models, but by who can design the best human experiences around them.

—

This is the first in a 12-part series on UX, accessibility, and governance in AI. Next up: “Designing AI for Everyone.”

I love AI and I use it to rein in some of my thoughts because I’m a bit of an over-communicator (I can hear some of you nodding). That said, I don’t use AI to write for me, so there may be some mistakes, poor grammar, and a few run-on sentences.

References

WCAG in Plain English

https://aaardvarkaccessibility.com/wcag-plain-english/

OpenAI’s GPT-4 System Card (aka how we trained the model)

https://openai.com/index/gpt-5-system-card/